Powder enables research in a broad range of categories, including Mobile Communication, Wireless Communication and RF monitoring. We provide some examples of ongoing research in each of these categories.

Wireless Communication:

Mobile Communication:

RF Monitoring:

Powder is a highly flexible, remotely accessible, end-to-end software defined platform supporting a broad range of wireless and mobile related research. (Paper providing an overview of the Powder platform.)

Powder is still being deployed, but the following capabilities are already available for use:

If you are using the POWDER platform for your research, we ask that you use this POWDER citation.

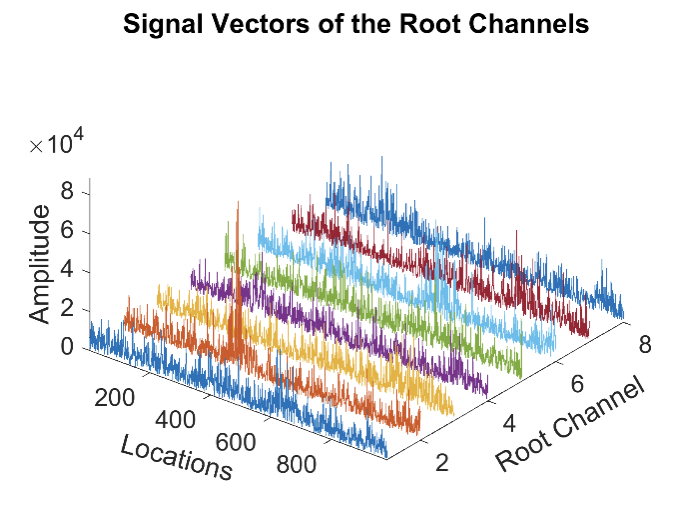

ZCNET is a novel Low Power Wide Area Network (LPWAN) technology that can meet the demands of the Internet of Things (IoT) applications in network capacity, data rate, and range. At the Physical (PHY) layer, ZCNET modulates data on both the location and phase of the peak generated by the Zadoff-Chu (ZC) sequence, which inspired its name. At the Medium Access Control (MAC) layer, ZCNET adopts ALOHA, because the Access Point (AP) is capable of decoding tens of simultaneous uplink transmissions, such as the signal shown in the figure on the right. ZCNET has produced very encouraging results and is expected to outperform existing LPWAN technologies such as LoRa, Sigfox, RPMA, Weightless, etc.

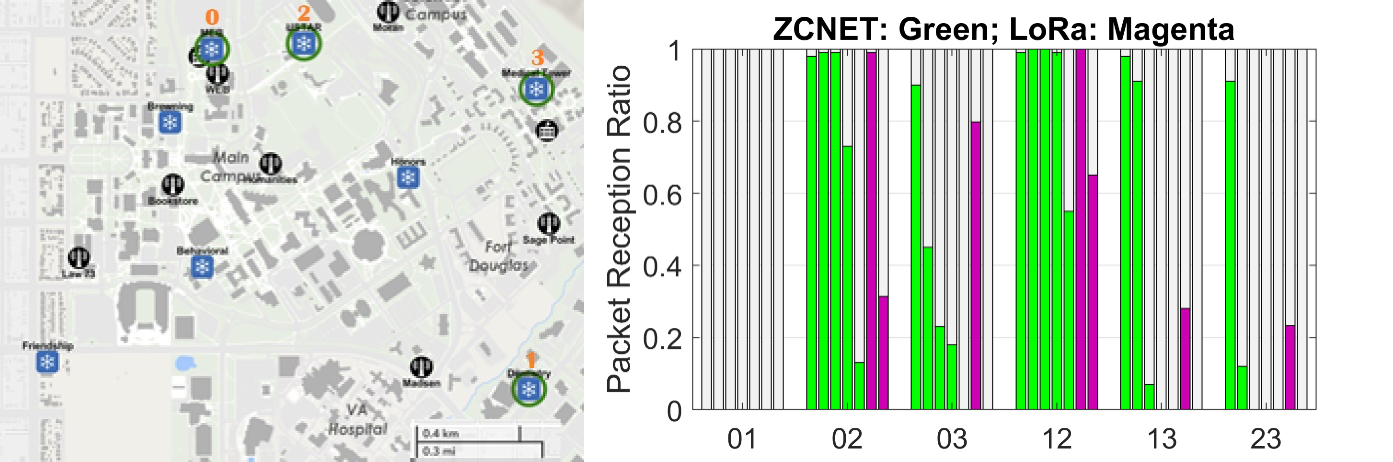

The physical layer of ZCNET has been tested on POWDER, and the results confirm that ZCNET achieves longer communication range than LoRa. The figure on the right shows the locations of the transceivers used in the experiments. Six links were tested, including some long links such as link 0-1 and link 1-2 that were over 2 km. For ZCNET, five data rates, namely, MCS A to MCS E, were tested. For LoRa, two data rates, namely, SF 12 and SF 9, were tested. As shown in the figure, ZCNET MCS A (the leftmost green bar) achieves higher Packet Receiving Ratio (PRR) than LoRa SF 12 (the leftmost magenta bar) even with a higher data rate while using less bandwidth.

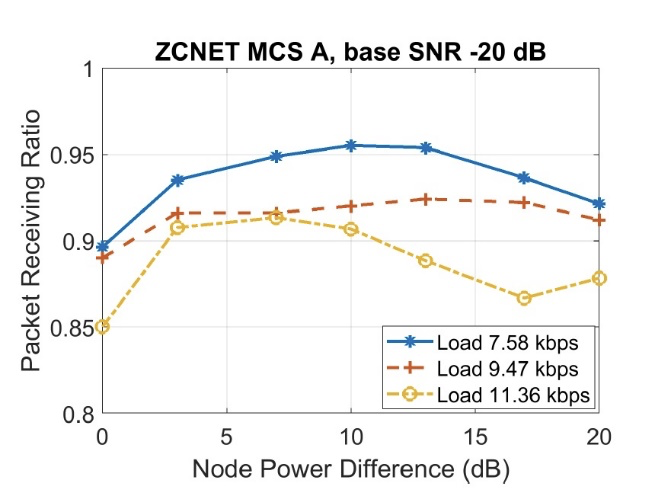

The network-wide uplink performance has been tested with simulations. The figure on the right shows the capacity of ZCNET under the most challenging channel conditions, where nodes have to use the lowest data rate. It can be seen that the PRR is above 0.9 for a load of 9.47 kbps, translating to over 40 simultaneous transmissions on average. In contrast, LoRa with SF 12 has a physical layer data rate of 0.209 kbps, which is an upper bound on its network capacity in this setting.

In the near future, the entire protocol stack of ZCNET will be implemented and tested on POWDER. With POWDER, many issues, e.g., the capability of the AP to decode tens of simultaneous transmissions, and the interactions between uplink and downlink, can be tested in real-time over diverse long-distance links in the real-world.

More information on this work is available from the ZCNET project site.

The ever-increasing use of mobile and wireless devices implies increased demand for wireless spectrum. Unfortunately, the amount of usable wireless spectrum is finite, which has led to research into dynamic spectrum sharing over the last decade. For example, in the US these efforts have resulted in lease-based spectrum sharing approaches in the 3.5 GHz band. While a significant step forward, these spectrum sharing efforts are fairly coarse grained in terms of the granularity (in both time and spectrum) at which spectrum is shared.

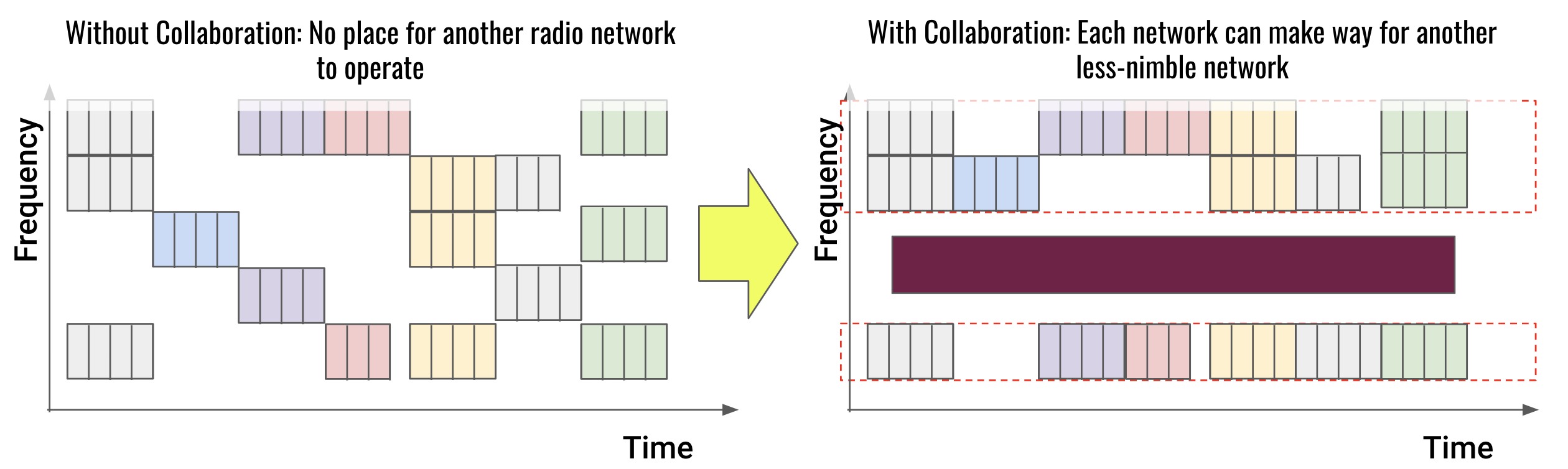

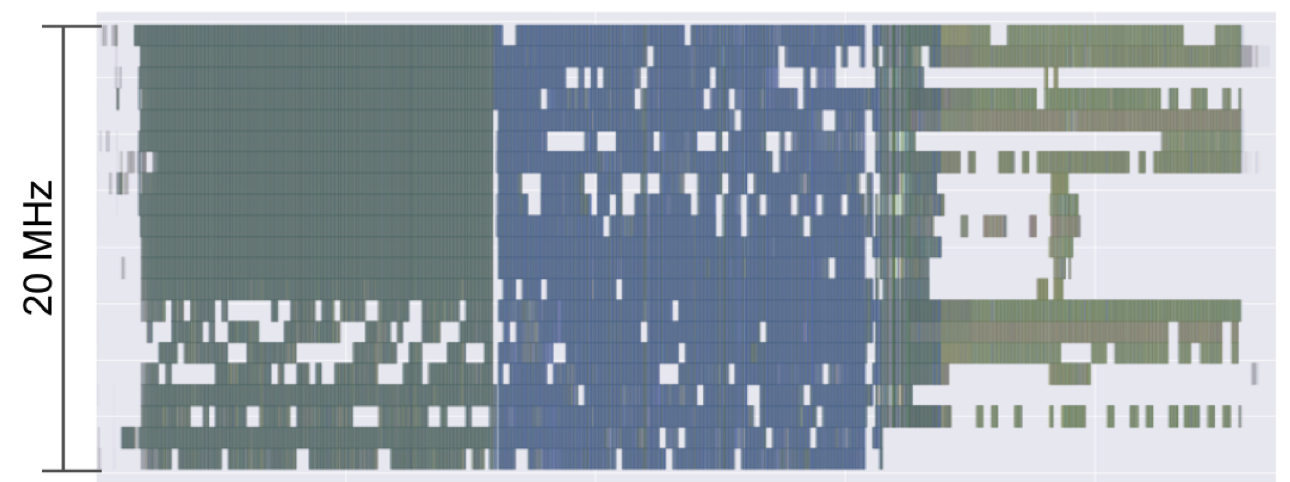

The recently completed DARPA Spectrum Collaboration Challenge (SC2) explored more fine-grained and collaborative approaches to spectrum sharing. Specifically, SC2 competitors used artificial intelligence, machine learning and software-defined radio technologies to realize autonomous and collaborative spectrum sharing approaches. (The figure above illustrates the benefit of a collaborative spectrum sharing approach.)

The Powder end-to-end software-defined infrastructure provides an ideal environment in which to explore the technologies developed through the DARPA SC2 program in a real-world setting. Like the Colosseum infrastructure on which the SC2 competition took place, POWDER has software-defined radios and accompanying mobile software stacks, which allows great flexibility in exploring next generation spectrum sharing.

Zylinium Research, one of the top three teams in the DARPA SC2 competition, is being funded by the US Department of Defense to continue its spectrum sharing research on the Powder platform. (The figure shows spectrum use by the Zylinium Research team during one of the SC2 competitions.) The Zylinium team is developing a new overlay capability in the CBRS band for 5G networks called the Zylinium Spectrum Exchange (ZSE). The ZSE coordinates spectrum usage at the scale of 5G resource blocks that are 180 kHz by 1 ms. The ZSE is not meant to replace any existing Spectrum Access System (SAS) governing CBRS spectrum across different tiers of users: Incumbents, Priority Access License (PAL) users, and General Authorized Access (GAA) users. Instead, it is conceived to allow more spectrum sharing within these tiers, creating greater spectral efficiency while minimizing interference.
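To make the 180 kHz by 1 ms granularity concrete, the following is a minimal sketch of how a point in frequency and time could be mapped onto such a grid. It is not the ZSE implementation: the function names are hypothetical, the band edges are the 3.55 - 3.7 GHz CBRS range, and guard bands and 5G numerology details are ignored.

```python
RB_BANDWIDTH_HZ = 180e3   # resource-block width stated in the text
RB_DURATION_S = 1e-3      # resource-block duration stated in the text
CBRS_START_HZ = 3.55e9    # CBRS band edges (3.55 - 3.7 GHz)
CBRS_STOP_HZ = 3.70e9


def blocks_across_band():
    """Number of whole 180 kHz blocks that fit in the band (no guard bands)."""
    return int((CBRS_STOP_HZ - CBRS_START_HZ) // RB_BANDWIDTH_HZ)


def resource_block_index(freq_hz, time_s):
    """Return (frequency index, time index) of the block containing the point."""
    if not CBRS_START_HZ <= freq_hz < CBRS_STOP_HZ:
        raise ValueError("frequency outside the CBRS band")
    f_idx = int((freq_hz - CBRS_START_HZ) // RB_BANDWIDTH_HZ)
    t_idx = int(time_s // RB_DURATION_S)
    return f_idx, t_idx
```

Under these assumptions, every 1 ms slot offers several hundred independently shareable blocks across the band, which is the scale at which a fine-grained exchange would have to coordinate.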

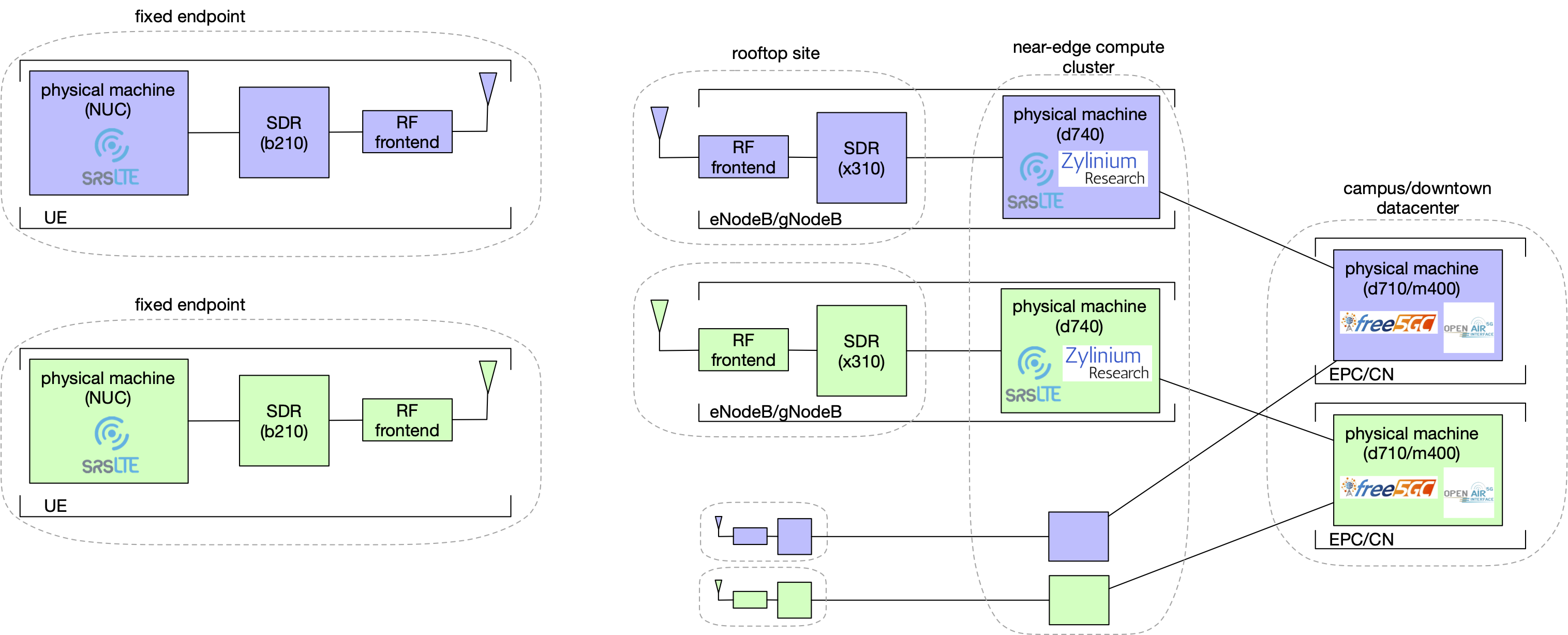

The figure shows the mapping to platform resources for the Zylinium team's initial Powder work.


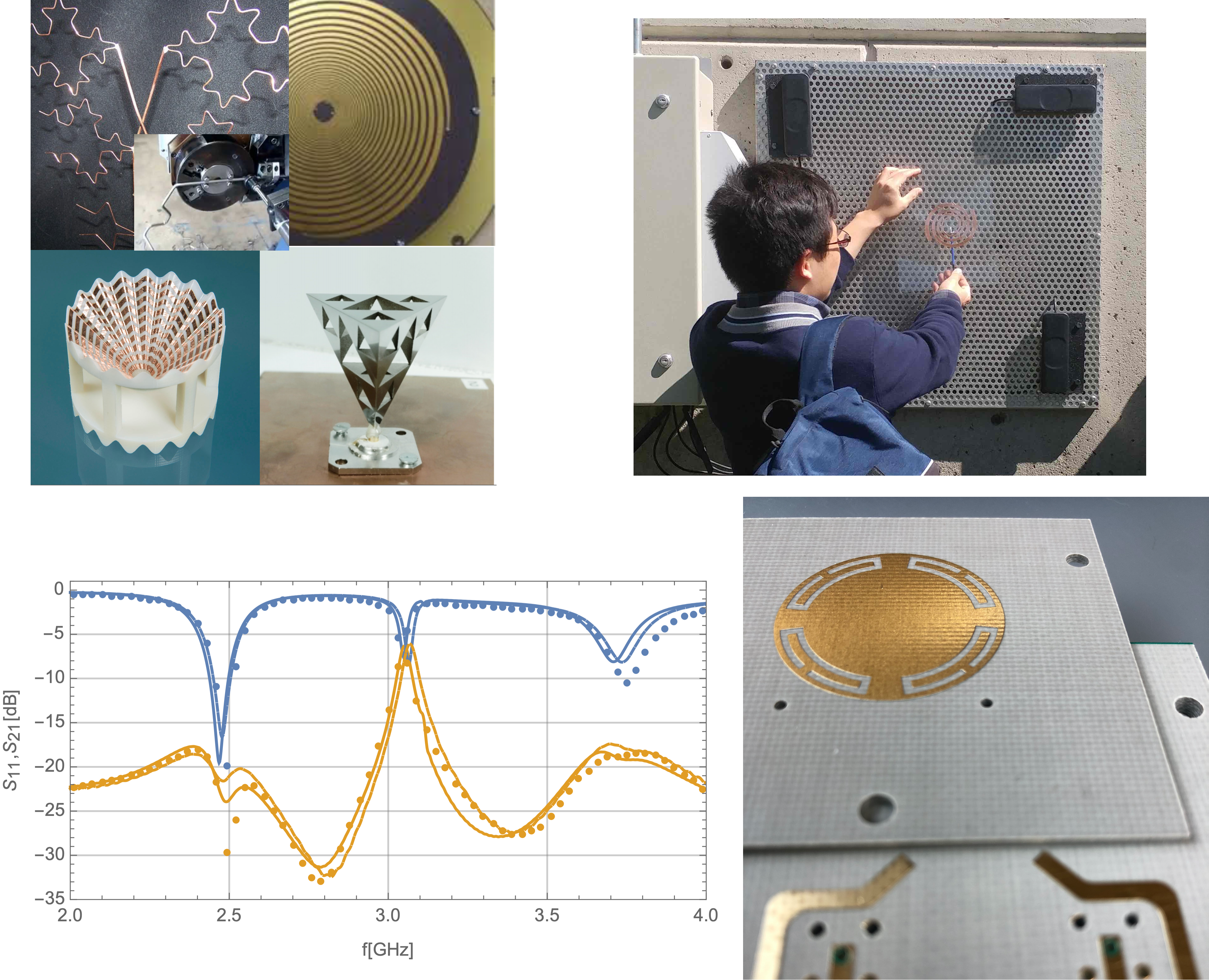

One of the unique goals of the Powder platform is to support electromagnetic design of antennas as an integral part of wireless network experimentation. We plan to support user-supplied antenna designs, with relatively rapid deployment onto the platform, from CAD to deployment in perhaps as little as one week. We can assist with: (i) electromagnetic design validation using industry-standard computational electromagnetics solvers, (ii) interfacing with the fabrication vendor, (iii) network analysis testing in an anechoic chamber, and (iv) antenna integration onto the Powder platform hardware. Supported rapid prototyping methods can include 3D printing, circuit board lithography or micro-machining, and CNC wire bending.

Current antenna research includes development of a dual-band antenna array for the POWDER-deployed massive-MIMO platform. The goal is to provide users instantaneous access to an LTE band near 2.5 GHz and the CBRS band, 3.55 - 3.7 GHz. These two bands will provide significant discrimination in scattering, path-loss, and general RF environment, enabling the testing of coding strategies and algorithms for RF robustness.

Rather than relying on traditional antenna performance parameters, such as S-parameters, directivity and radiation efficiency, proposed designs are being evaluated and optimized using system level metrics. Maximizing mean spectral efficiency over a statistical ensemble of user endpoint locations and scattering-centers allows one to automatically make quantitatively efficient trade-off decisions between: pattern, polarization, inter-channel coupling, and radiation efficiency. Optimal design parameters obtained with system level metrics also allow quantitative balancing of the performance across two (or more) bands.

The figure (clockwise from top left) shows example prototype antennas, an antenna designed during POWDER-RENEW Mobile and Wireless Week (MWW2019) being connected to a fixed-endpoint node for testing, a dual-band antenna design and corresponding S11 and S21 plots.

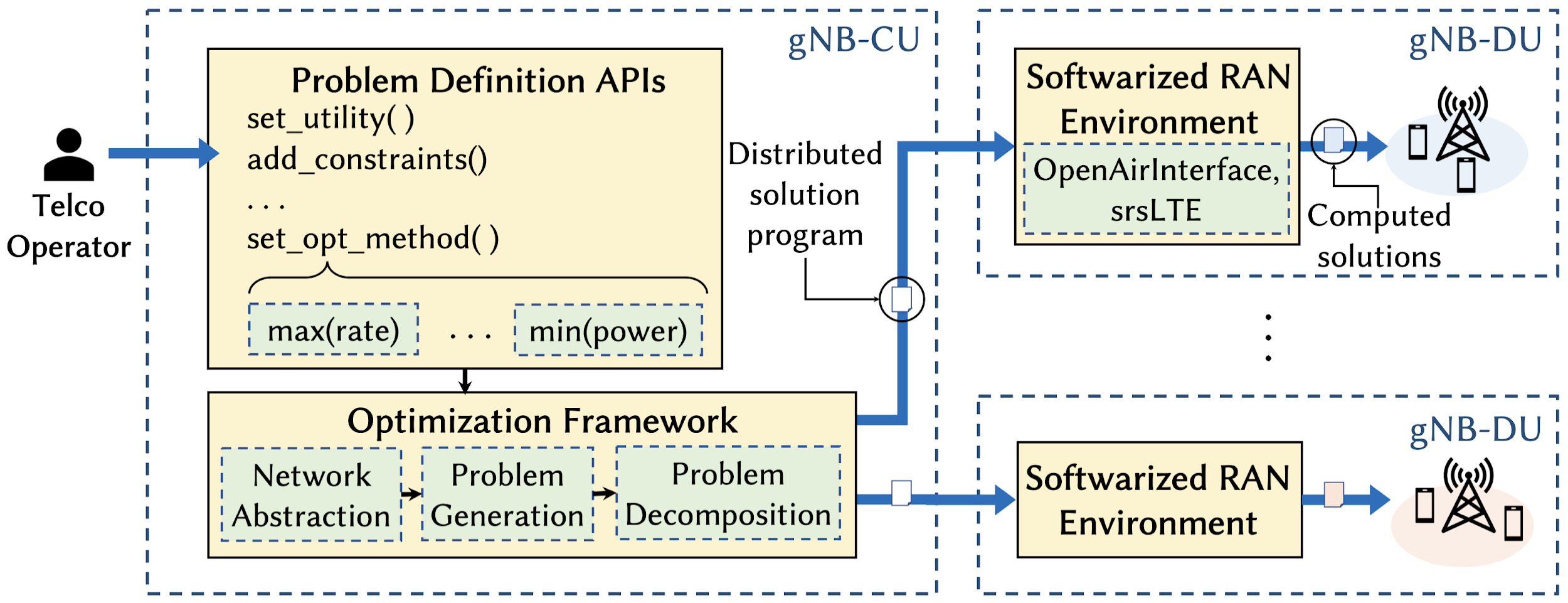

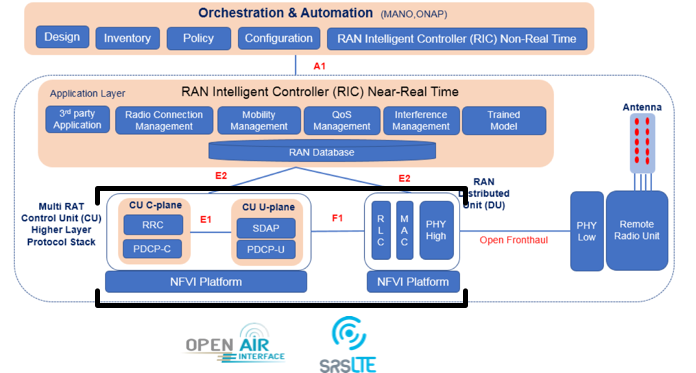

The CellOS work focuses on automated softwarization and self optimization of future 5G networks, by realizing the first zero-touch software framework for next-generation cellular networks. Like an operating system interfacing hardware and software functions (hence the name), CellOS flexibly bridges Software-defined Networking (SDN) with cross-layer distributed optimization techniques for the cellular architecture. We push the SDN paradigm beyond the traditional separation of control and data planes, in that we also decouple control from optimization, adding further and unprecedented flexibility. Responding fully to ETSI requirements and industry interests, CellOS enables zero-touch control and optimization of low-level network functionalities by providing Telco Operators (TOs) with an efficient, automated, modular, and flexible network control platform. Specifically, CellOS (i) allows TOs to define centralized and high-level control objectives (e.g., “maximize network throughput”) without requiring expertise in cross-layer optimization theory or knowledge of network specifics; (ii) provides a general virtual network abstraction that shields the TO from the complexity of a sophisticated framework by abstracting network infrastructure and parameters, including those known at run-time only (e.g., user-to-base station associations and channel information); (iii) automatically converts high-level control directives into distributed cross-layer control programs to be executed at each network edge element, and (iv) enables zero-touch optimization of distinct control objectives on different network slices coexisting on the same infrastructure.

The figure on the right illustrates the overall structure of CellOS, exemplified for the 3GPP network architecture. The upper-left side of the figure depicts the high-level APIs that the TO can use to define the network control objectives. On the bottom we indicate the components of the framework for automatic generation of the optimization problems and their decomposition into control programs. In a 3GPP scenario this unit corresponds to the gNB-CU, a logical node primarily concerned with control decisions at larger time-scales. On the right, we describe the softwarized Radio Access Network (RAN) that will execute the generated programs. In the 3GPP context, this task would be carried out by the gNB-DU, a logical node that makes time-sensitive decisions involving the lower layers of the protocol stack, and that is interfaced with the gNB-CU.

We have prototyped CellOS on heterogeneous LTE-compliant testbeds, with two different implementations of the LTE stack (OpenAirInterface and srsLTE), and on the long-range POWDER platform. We show that CellOS is transparent to the use of network slicing technologies, enabling TOs to simultaneously optimize different network functions on distinct network slices. Results of the comparative performance evaluation of CellOS and prevailing baseline solutions show that using our framework remarkably improves key performance metrics, such as throughput (up to 86%), energy efficiency (up to 84%) and user fairness (up to 29%). To the best of our knowledge this is the first such demonstration, paving the way to the independent management of optimized network slices in 5G systems.

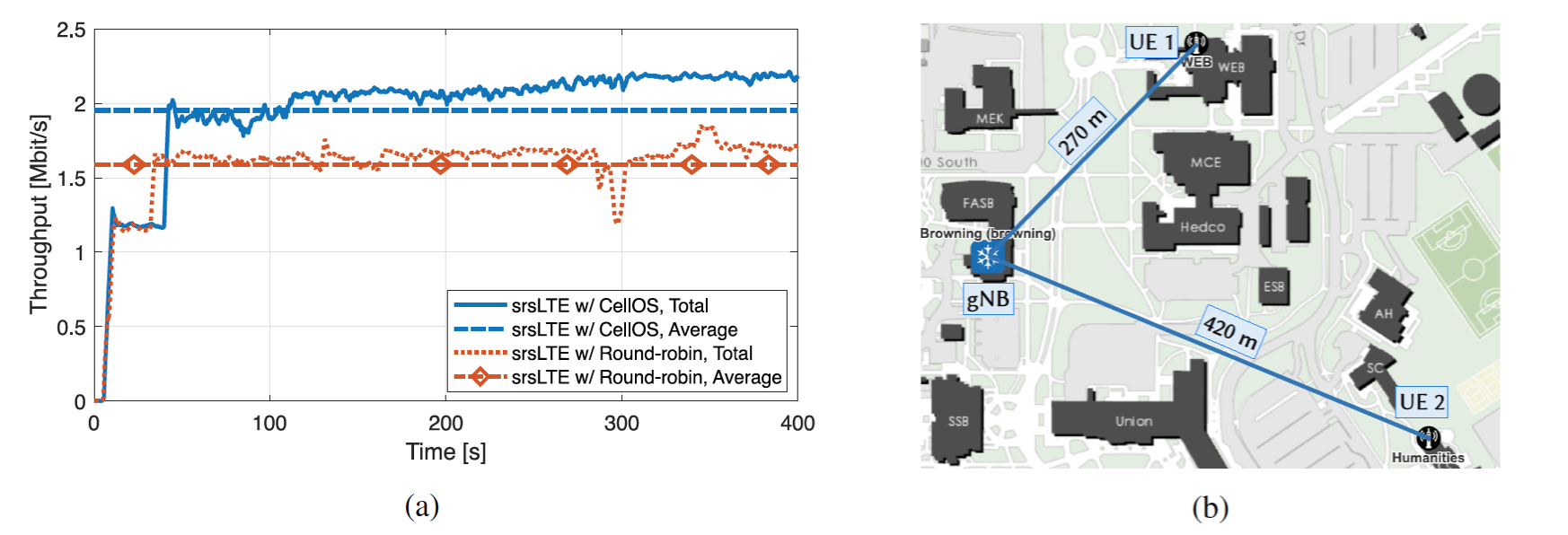

We demonstrate the platform- and RAN-independence of CellOS by running long-range experiments on one of the PAWR wireless platforms. Specifically, we leverage the POWDER platform and the 5G implementation of srsLTE to deploy an NR gNB and 2 UEs in an authentic outdoor wireless environment. The gNB employs a USRP X310 located on the rooftop of a 28.75 m-tall building, while we use ground-level USRP B210s as UEs. The two UEs are located 270 m and 420 m from the gNB (see Figure (b)). Figure (a) shows the throughput gains achievable by running CellOS rate maximization on top of srsLTE, which uses a round-robin scheduler when instantiated without CellOS.

More information on this work is available in the full CellOS paper and from the WiNES and WINGS Lab pages.

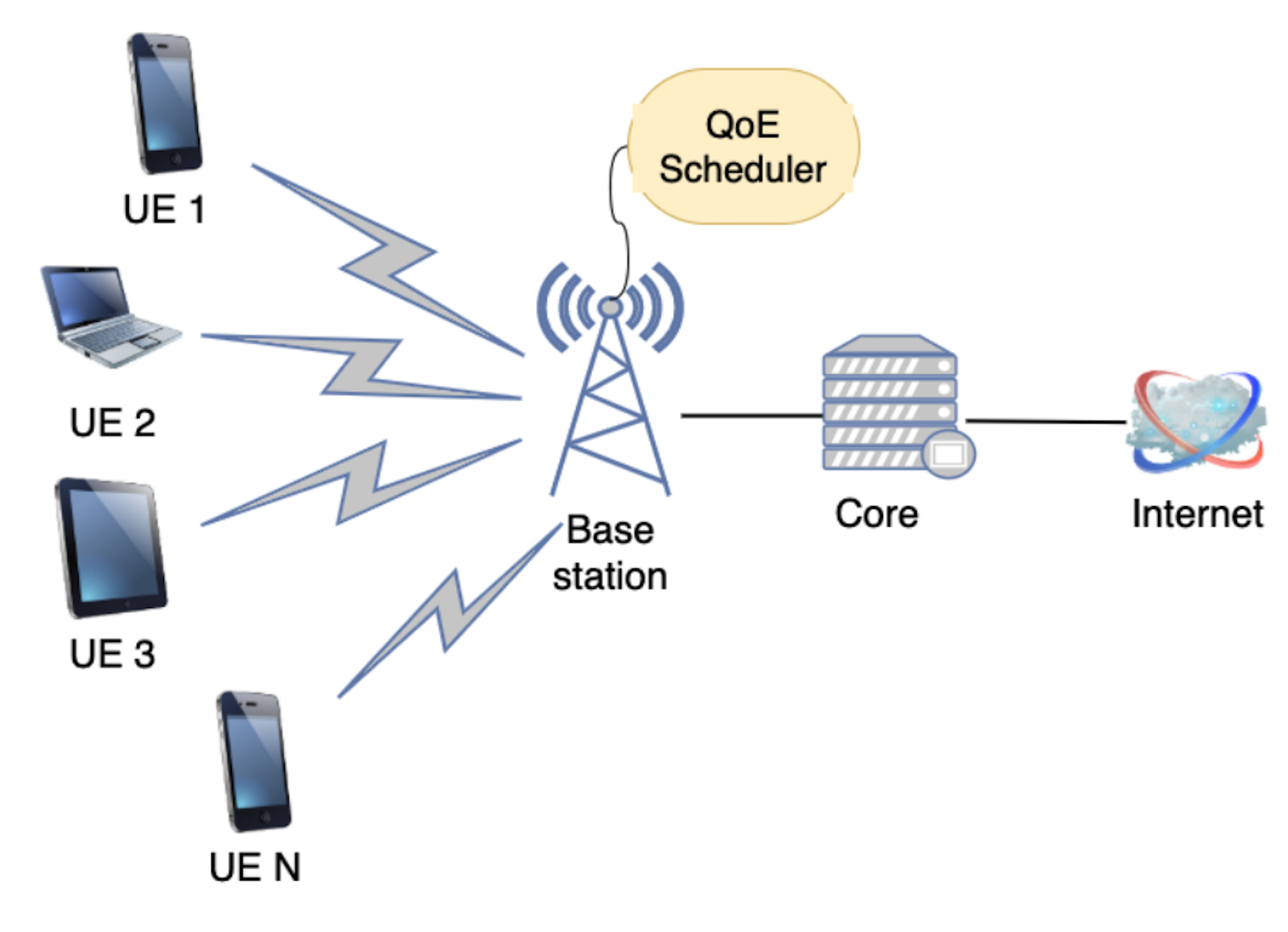

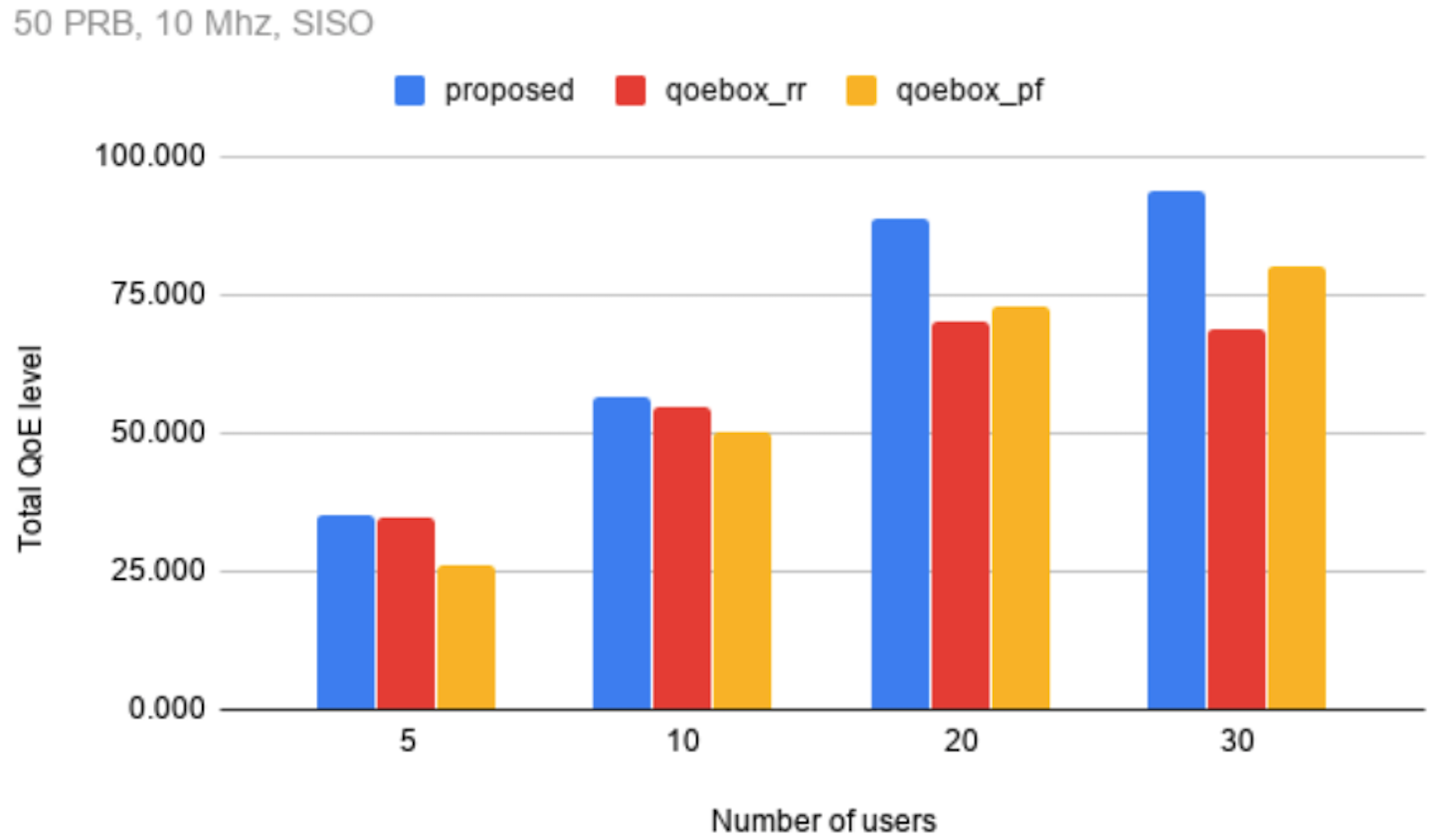

Network quality-of-service (QoS) based resource distribution schemes may fail to deliver decent quality-of-experience (QoE) to users. Recently, QoE-aware solutions have started to emerge for various application-specific domains. In mobile networks, however, the QoE of users suffers due to the lack of application awareness at base stations.

To address this problem, we explore a set of methods for leveraging higher-layer application and network features at the scheduling stage. As the core component in this study, we propose QMacs, a QoE-aware radio resource scheduler for cellular base stations. It adopts a greedy resource allocation algorithm with the goal of maximizing total QoE while maintaining proportional fairness among users. We tested our system using simulation and the POWDER testbed. Experiments show that QMacs can increase QoE (during 2-minute-long DASH ABR video streaming) by up to 28% without violating the fairness index.
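The general idea of a greedy, fairness-weighted QoE allocation can be sketched as follows. This is an illustration only and should not be read as the QMacs algorithm: the logarithmic QoE model, the per-block rates, and the function name are all assumptions made for the example.

```python
import math


def greedy_qoe_schedule(num_blocks, rate_per_block, avg_rate):
    """Hand out resource blocks one at a time (hypothetical sketch).

    rate_per_block[u]: rate user u gains from one block (assumed units)
    avg_rate[u]: long-term average rate of user u, used as the
                 proportional-fairness denominator
    """
    def qoe(rate):
        # Assumed concave QoE model: diminishing returns in delivered rate.
        return math.log1p(rate)

    allocation = {user: 0 for user in rate_per_block}
    for _ in range(num_blocks):
        best_user, best_gain = None, float("-inf")
        for user, rate in rate_per_block.items():
            current = allocation[user] * rate
            # Marginal QoE of one more block, weighted for fairness.
            gain = (qoe(current + rate) - qoe(current)) / avg_rate[user]
            if gain > best_gain:
                best_user, best_gain = user, gain
        allocation[best_user] += 1
    return allocation
```

Because the assumed QoE curve is concave, a user with a good channel stops dominating once its marginal gain falls below that of the others, which is how a greedy rule can raise total QoE without starving anyone.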

The broad industry trend towards software defined networks is also fundamentally changing the manner in which mobile and wireless networks are being built. The ability to "softwarize" the radio access network (RAN) is of particular interest as it enables innovation in the RAN/multi-access edge compute (MEC)/ultra-low-latency domains. RAN programmability efforts have evolved from early research efforts to more ambitious industry efforts, such as the O-RAN Alliance that is undertaking broad standardization and development in this area.

Given its flexibility, the Powder platform is ideally suited to explore RAN programmability. We have a number of ongoing efforts in this space, including the following:

Increased use of mobile and wireless devices has caused increased demand for wireless spectrum. In some sectors, specifically mobile communication, the increased demand for spectrum is manifest through ever-increasing numbers of mobile devices. What is not clear, however, is the extent to which spectrum is actually being used across the overall spectrum range. We performed an initial study to investigate actual spectrum usage in the sub-6 GHz range. We further investigated whether spectrum usage can be predicted using machine learning methods. (The details of this work can be found in the MS Thesis titled “Spectrum Usage Analyses and Prediction Using LSTM Networks,” by Anneswa Ghosh, School of Computing, University of Utah.)

We specifically study spectrum usage in the frequency range 700 MHz to 2.8 GHz in Salt Lake City, Utah. Our study indicates that several portions of these frequencies are under-utilized. Furthermore, we observe that certain frequency bands demonstrate clear usage patterns, e.g., higher utilization during the daytime than at night, suggesting that this behavior can be exploited for opportunistic secondary usage of the spectrum.

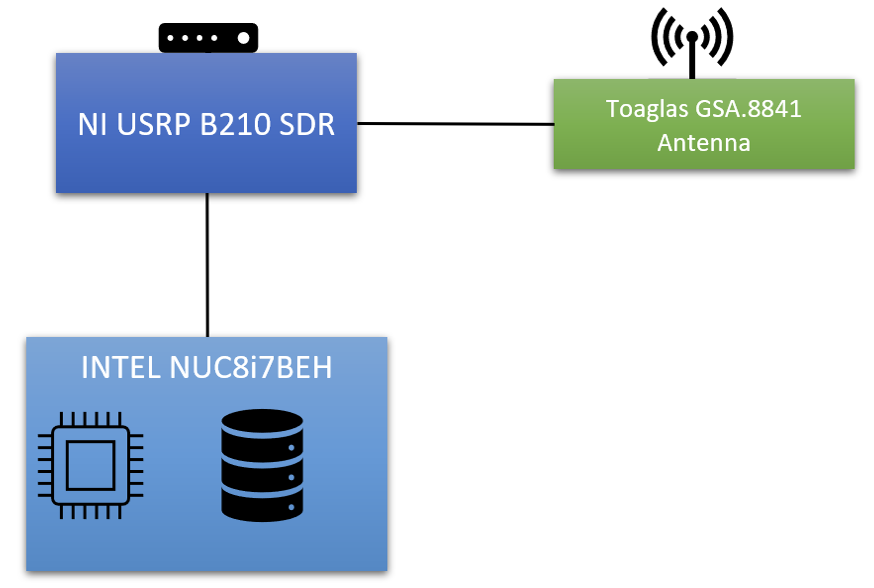

Our spectrum usage data is obtained using the Receive-only Fixed Endpoint deployment in the Powder platform. The Fixed Endpoint experiment setup consists of an ensemble of Software Defined Radio (SDR) equipment from NI, and a compute node. The Fixed-Endpoint equipment used for this study is an NI USRP B210 SDR with its ports connected to a dedicated Taoglas GSA.8841 wideband I-bar antenna. This antenna has a frequency range of 698-6000 MHz and an average gain of approximately -2 dBi across the range. The USRP is also connected to an Intel NUC, a small form factor compute node, via USB 3.0.

The SDR device is accessed using the Python API provided by the USRP Hardware Driver (UHD). We use this API to set the receive gain and to acquire I/Q samples from a specific channel at a specified sample rate. The measured frequency range is divided into bands, each with a width of 30 MHz. Each 30 MHz band is further divided into 200 points such that the distance between two consecutive frequency points is 150 kHz. Each frequency point is represented by the center frequency of its 150 kHz wide channel. The raw data collected for each frequency point is the signal power computed from the USRP samples. We scan the frequency bands, acquiring raw I/Q samples at a sample rate of 30 MHz and processing the samples to compute log power for 150 kHz bins.
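The binning arithmetic above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the study's processing code: it uses a plain 200-point DFT on a single block of samples (a real pipeline would more likely use an FFT library and average over many blocks), the function names are hypothetical, the power is uncalibrated, and sample acquisition through UHD is not shown.

```python
import cmath
import math

BAND_WIDTH_HZ = 30e6      # width of one scanned band, equal to the sample rate
POINTS_PER_BAND = 200     # frequency points per band, so bins are 150 kHz wide


def bin_centers(band_center_hz):
    """Center frequency of each 150 kHz bin, lowest frequency first."""
    step = BAND_WIDTH_HZ / POINTS_PER_BAND
    half = POINTS_PER_BAND // 2
    return [band_center_hz + (k - half) * step for k in range(POINTS_PER_BAND)]


def log_power_bins(iq):
    """Uncalibrated log power (dB) per bin from one block of complex samples."""
    n = len(iq)
    powers = []
    for k in range(n):
        # Direct DFT of bin k; O(n^2) overall, acceptable for n = 200.
        acc = sum(x * cmath.exp(-2j * math.pi * k * i / n)
                  for i, x in enumerate(iq))
        # Small floor avoids log10(0) for empty bins.
        powers.append(10 * math.log10(abs(acc) ** 2 / n ** 2 + 1e-20))
    # Reorder so that index 0 is the lowest frequency (an "fftshift").
    half = n // 2
    return powers[half:] + powers[:half]
```

With 200 samples taken at 30 MHz, each DFT bin spans exactly 30 MHz / 200 = 150 kHz, which matches the frequency-point spacing described in the text.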

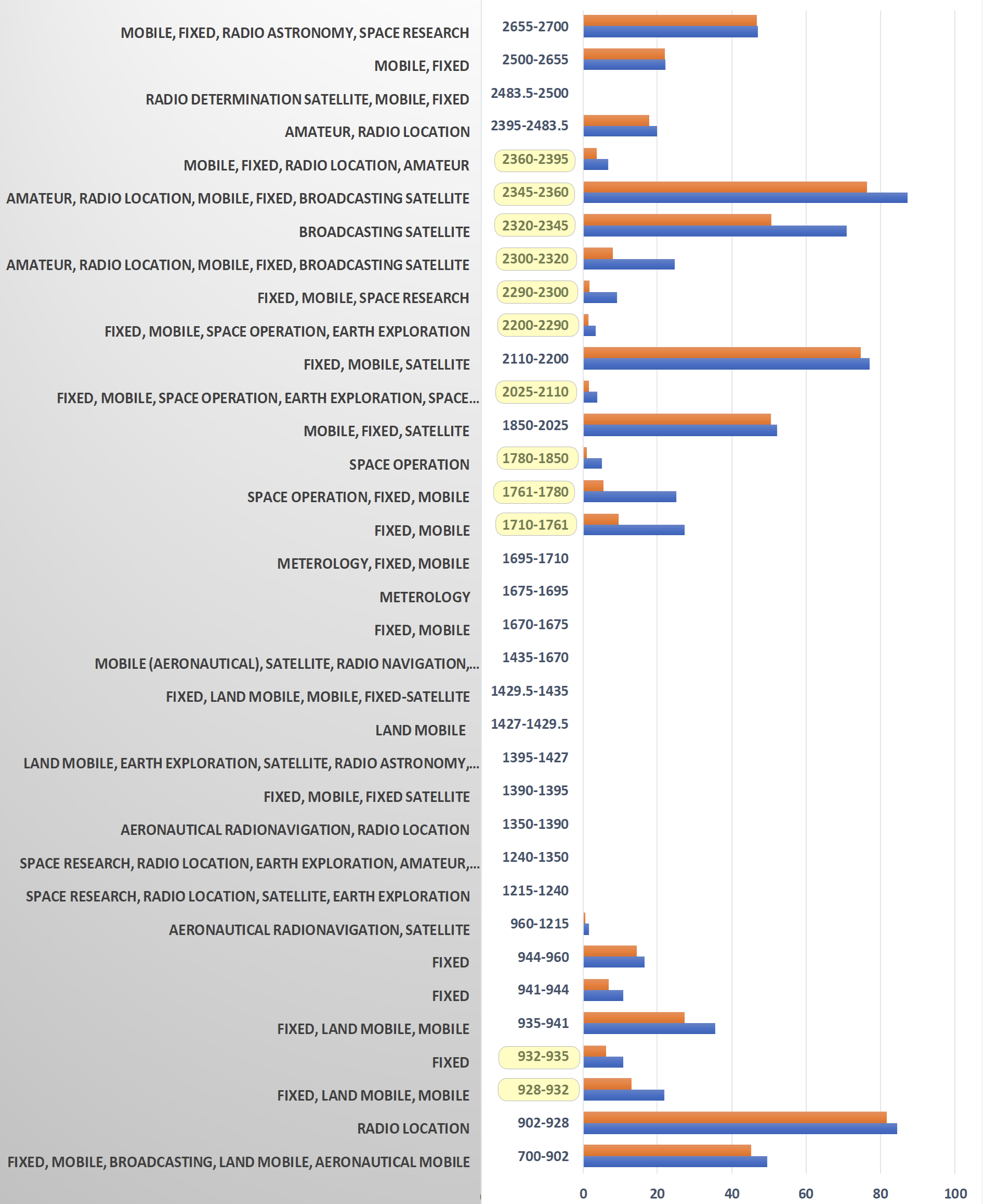

We collect spectrum data for five days from March 8th, 2020, 11:00 PM to March 13th, 2020, 11:00 PM. The spectrum usage in the frequency range of 700 MHz to 2800 MHz, along with the spectrum allocation categories by the US Department of Commerce, is shown on the right.

Observations:

Spectrum prediction allows predicting the signal power and hence the occupancy state of a channel in future time slots. Without spectrum prediction, an opportunistic secondary user (OU) of the spectrum must perform spectrum sensing for a large set of frequencies and determine the presence of a signal in each of them before actually using any available frequency to transmit. Spectrum prediction also allows OU to select the best channel that has the highest potential to be available when predicted by different mechanisms. We consider a wireless communication system where transmissions are performed in well-defined time slots as in a time-division multiplexed system. The OU needs to perform spectrum sensing and spectrum decision in each time slot before the transmission. Our motivation for spectrum prediction is based on the presence of temporal patterns that we observe in the spectrum usage data.
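The step from predicted power to a channel choice can be sketched as below. This is a simplified illustration, not the system described in the thesis: the threshold value, the dictionary layout, and both function names are assumptions, and the predicted power values would come from whatever prediction model is in use.

```python
def occupancy(power_db, threshold_db):
    """Map per-slot power (dB) to occupancy: 1 if above threshold, else 0."""
    return [1 if p > threshold_db else 0 for p in power_db]


def pick_channel(predicted_power_db, threshold_db):
    """Return the channel predicted to be idle in the most future slots.

    predicted_power_db maps a channel identifier (here, a center frequency
    in MHz) to its predicted power for each upcoming time slot.
    """
    def idle_fraction(channel):
        states = occupancy(predicted_power_db[channel], threshold_db)
        return 1 - sum(states) / len(states)

    return max(predicted_power_db, key=idle_fraction)
```

For example, given predictions for two channels over three slots and an assumed threshold of -90 dB, `pick_channel` returns the channel whose predicted power stays below the threshold in every slot.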

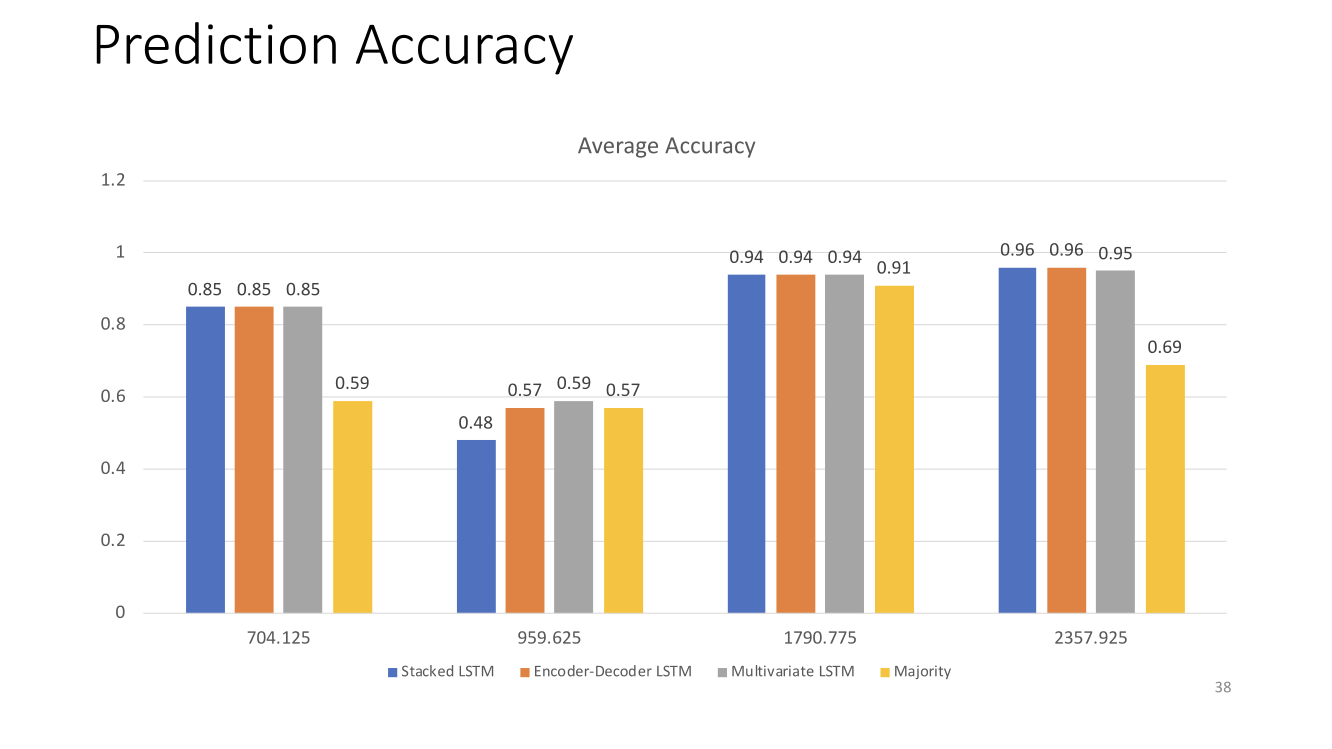

We propose a spectrum prediction system using Long Short-Term Memory (LSTM) neural networks to predict the occupancy of a channel in future time slots. We further introduce an LSTM based Window Selector to find the optimal window of future forecasts that increase the utilization of the network while minimizing the interference caused by the opportunistic user. Our experiments show that the Multivariate LSTM model can be reliably used to guide the choice of the channel for the opportunistic user. The figure on the right shows the prediction accuracy of our approach with different bands and different LSTM models.

Powder is capable of being used in large-scale repeatable channel measurement studies. In addition to being frequency-agile, the Powder platform has a large number of endpoints, both at rooftop height and at endpoint height. These can be reserved for use in a large channel measurement study, and can be repeatedly used to measure the same exact network in different weather / seasons, interference, and time-of-day conditions.

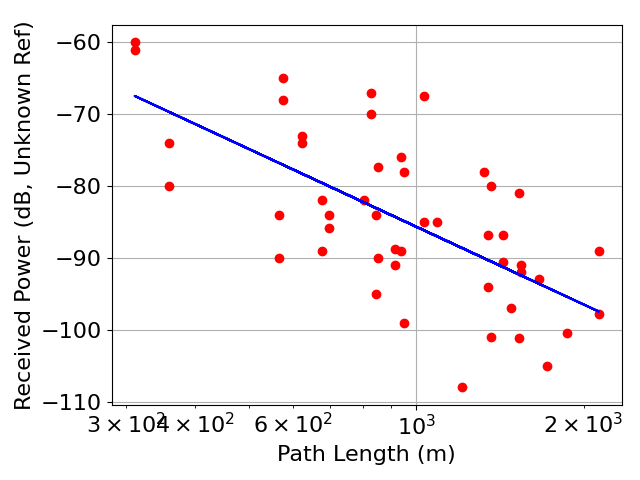

As an example, we use eight rooftop CBRS nodes, that is, the NI X310 on each rooftop node. We run an experiment as described at PathLossMeasurement.md. We use one rooftop node at a time as a transmitter and measure received power at the other seven; we then repeat with the next node as transmitter until all 8 × 7 = 56 links are measured. We determine that the measurements fit the path loss exponent model with an exponent of 3.6, that is, the power decays proportionally to $d^{-3.6}$, where $d$ is the path length. Such path loss models are useful for cellular deployment planning. Improvements in path loss models can aid in automated development of better deployment plans, which then ensure sufficient SINR across a cellular network.
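A path loss exponent of this kind is typically obtained by a straight-line fit of received power in dB against 10·log10 of the path length. The sketch below shows one way to do that with ordinary least squares; it is an illustration under that assumption, the function name is hypothetical, and it is not the script referenced in PathLossMeasurement.md.

```python
import math


def fit_path_loss(distances_m, power_db):
    """Fit P(d) = p0 - 10 * n * log10(d) by ordinary least squares.

    Returns (n, p0, sigma_db): the path loss exponent, the intercept at
    d = 1 m (uncalibrated reference), and the residual standard deviation.
    """
    x = [10 * math.log10(d) for d in distances_m]
    mean_x = sum(x) / len(x)
    mean_y = sum(power_db) / len(power_db)
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, power_db))
    slope = sxy / sxx
    p0 = mean_y - slope * mean_x
    residuals = [yi - (p0 + slope * xi) for xi, yi in zip(x, power_db)]
    sigma_db = math.sqrt(sum(r * r for r in residuals) / len(residuals))
    return -slope, p0, sigma_db
```

Because only the slope determines the exponent, the fit works even when the received power is uncalibrated; an unknown reference level simply shifts the intercept.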

The figure shows the received power (red dots) vs. path length for 56 links between pairs of CBRS rooftop nodes. The dB received power is not calibrated, so is listed as referred to an unknown reference. The path loss exponent model for the measurements (blue line) has a path loss exponent of 3.6 and standard deviation of 8.5 dB.

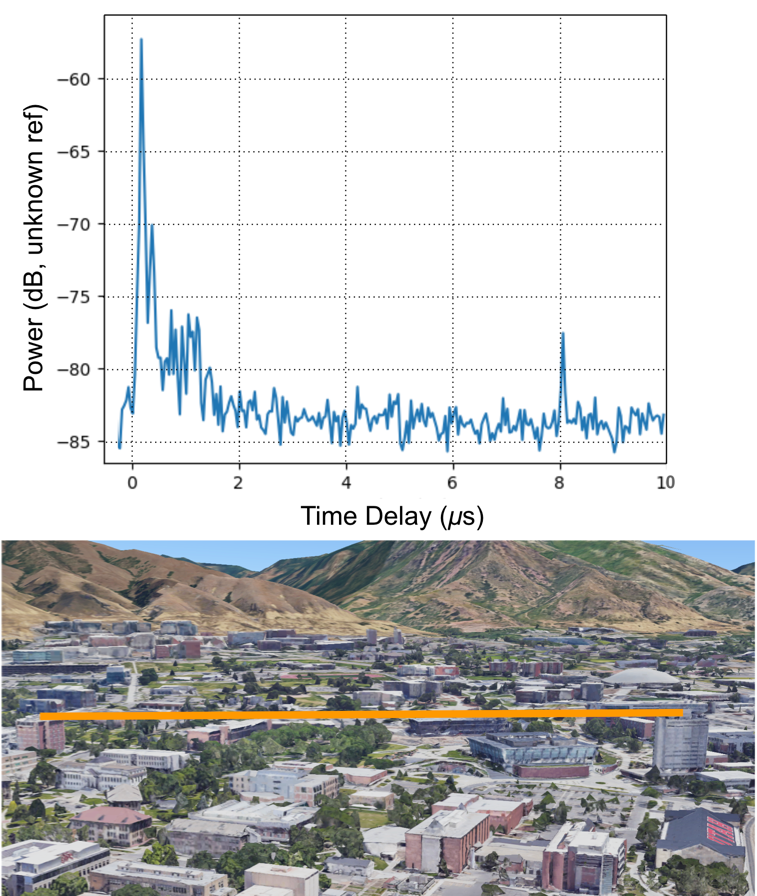

We can also conduct wideband channel impulse response (CIR) measurements, and use them to develop multipath models which then impact wireless system design. For example, we use a pseudo-noise (PN) signal transmitter and a correlation receiver to measure the CIR between two base stations in the figure below. As expected, we see multipath powers with exponentially decreasing magnitude as a function of excess time delay. We additionally see a multipath component at 8 μs with 20 dB less power than the first path, which may be attributed to a reflection from the mountains bordering campus.
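The principle behind a PN transmitter and correlation receiver can be sketched with a short simulation. This is an idealized, noise-free illustration and not the measurement code used on Powder: the 127-chip sequence, the two-tap channel, and all function names are assumptions chosen for the example.

```python
def m_sequence():
    """127-chip +/-1 maximal-length sequence, a[n] = a[n-7] XOR a[n-6]."""
    state = [1] * 7
    bits = []
    for _ in range(2 ** 7 - 1):
        bits.append(state[0])
        state = state[1:] + [state[0] ^ state[1]]
    return [1 - 2 * b for b in bits]


def apply_channel(pn, taps):
    """Periodic PN signal through a sparse channel {delay_chips: amplitude}."""
    n = len(pn)
    return [sum(a * pn[(i - d) % n] for d, a in taps.items())
            for i in range(n)]


def estimate_cir(rx, pn):
    """Circularly correlate the received signal with the known PN sequence."""
    n = len(pn)
    return [sum(rx[i] * pn[(i - k) % n] for i in range(n)) / n
            for k in range(n)]
```

A maximal-length sequence has a sharply peaked periodic autocorrelation, so correlating the received signal against it recovers each path at its delay. In this sketch, a second tap with amplitude 0.1 (20 dB below the first path in power) reappears at its delay in the estimate, up to a small bias of order 1/127.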

The (Top) figure shows the channel impulse response estimate (blue line), and (Bottom) Google Earth view, of the link between the William Browning Building and Behavioral Science Building rooftop nodes. The path at 8 μs may be a reflection from the mountains.

Cellular operators know that weather changes cause disconnects attributed to changes in received signal power and interference power. Powder provides a unique platform to observe and model temporal changes at a variety of time scales, by measuring the same channel over seconds, minutes, hours, days, and seasons, which generally has not been observed or well modeled. We expect that the Powder platform will enable key new models which increase the reliability of 5G cellular and other future generation wireless systems.

Acknowledgement: Data for these figures came from participants in the 2020 NSF Research Experience for Undergraduates (REU) program.

Powder is uniquely capable of being used in localization research with its access to mobile and stationary endpoints, rooftop base stations, and massive MIMO nodes, as well as its highly accurate time and frequency synchronization capabilities. Powder enables measurements of received power, angle, and time-of-arrival, and additionally enables scheduled transmission and user-defined transmit power and relative phase, which, in combination, support a broad segment of radio localization research.

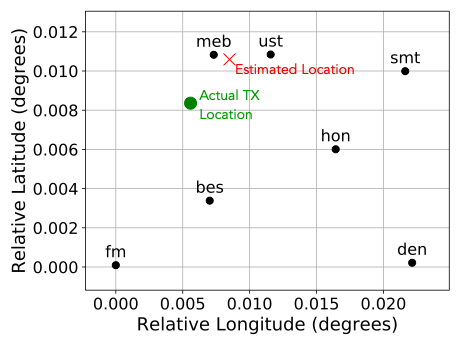

First, GPS coordinates of rooftop nodes and endpoints are available for all nodes in Powder. These coordinates can be used to find distances between nodes, or to plot on top of maps. For example, the figure shows the results of a basic Powder experiment testing transmitter localization using received power measurements. The experiment used seven rooftop nodes as receivers and one as a transmitter. The figure shows the locations of seven rooftop nodes (black dots), in latitude & longitude degrees relative to the Friendship Manor (fm) node. A received power-based localization algorithm provides a transmitter location estimate (X), vs. the actual transmitter location (green dot).
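One simple form of received-power-based localization is a grid search under a path loss exponent model. The sketch below is an illustration of that idea, not the algorithm used in the experiment: it assumes the node coordinates have already been converted to local x/y meters, that the exponent is known, and that the transmit power is unknown; the function name and grid parameters are hypothetical.

```python
import math


def locate_by_power(receivers, power_db, exponent,
                    grid_step=20.0, extent=1000.0):
    """Grid search for the transmitter position (x, y) in meters.

    At the true position, power_db[i] + 10 * exponent * log10(d_i) is the
    same for every receiver (it equals the unknown reference power), so the
    candidate with the smallest spread of that quantity is selected.
    """
    best, best_cost = None, float("inf")
    steps = int(extent / grid_step)
    for ix in range(-steps, steps + 1):
        for iy in range(-steps, steps + 1):
            x, y = ix * grid_step, iy * grid_step
            ref = []
            for (rx, ry), p in zip(receivers, power_db):
                # Clamp distance to 1 m to avoid log10(0) at a receiver.
                d = max(math.hypot(x - rx, y - ry), 1.0)
                ref.append(p + 10 * exponent * math.log10(d))
            mean = sum(ref) / len(ref)
            cost = sum((r - mean) ** 2 for r in ref)
            if cost < best_cost:
                best, best_cost = (x, y), cost
    return best
```

With real measurements, shadowing of several dB per link would move the estimate away from the true position, which is consistent with the offset between the estimate and the actual transmitter location shown in the figure.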

Second, for higher accuracy localization, Powder will have two systems for time and frequency synchronization of rooftop nodes. Each rooftop site currently has a GPS-disciplined oscillator driving an Ettus Research OctoClock, which provides 1 pulse-per-second (PPS) and 10 MHz signals to each software-defined radio (SDR) at the site. Further, the Powder team is evaluating Seven Solutions’ White Rabbit (WR) WR-ZEN TP-FL time synchronization system, which can provide sub-nanosecond level accuracy between rooftop nodes over fiber. WR was developed as an open collaboration to meet the time synchronization needs of large-scale physics experiments such as CERN. At this level of accuracy, time-of-arrival measurements at different rooftop sites could be accurately time-stamped such that errors from synchronization are on the order of 10 cm. Powder plans to use the PPS and 10 MHz signals derived from WR for all SDRs in each rooftop site. Thus the phase and time synchronization provides a predictable phase difference between the antennas in the system, such as the elements of the massive MIMO antenna array provided by RENEW. This stable, predictable phase difference could thus be used to estimate angle-of-arrival using measurements on multiple antenna elements. With the WR hardware fully deployed, the GPS-DO would act as a backup in case the WR signals are unavailable.
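The link between synchronization accuracy and ranging error is a direct multiplication by the speed of light. The short sketch below makes that relationship explicit; the function names are hypothetical, and it considers only the synchronization term, ignoring multipath, bandwidth, and noise effects.

```python
SPEED_OF_LIGHT_M_S = 299_792_458.0


def ranging_error_m(sync_error_s):
    """Distance error caused by a given clock offset between two sites."""
    return SPEED_OF_LIGHT_M_S * sync_error_s


def sync_error_for_range_s(range_error_m):
    """Clock offset that corresponds to a given distance error."""
    return range_error_m / SPEED_OF_LIGHT_M_S
```

A 1 ns offset corresponds to roughly 30 cm, so an error budget on the order of 10 cm requires synchronization of about a third of a nanosecond, which is in line with the sub-nanosecond figure quoted for White Rabbit.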

While no OctoClock is currently deployed at fixed endpoints or mobile endpoints, the POWDER team is developing a GPS-DO clock distribution network for these endpoints. One may perform localization at the endpoints using the synchronized transmissions from the rooftop nodes, or use time difference of arrival (TDOA) to locate transmissions from the endpoint nodes.

Time-based localization requires time information to be available to the localization application. Calls to the UHD library allow the clock source (internal oscillator vs. external clock) to be specified. Further, the UHD interface to each SDR allows transmission or reception to be scheduled w.r.t. the 1 PPS signal, and it also provides time stamp metadata to be recorded along with signal samples.

We expect that these capabilities will allow for highly accurate time-based localization across a variety of TDOA or time-of-arrival (TOA) localization systems, angle-of-arrival systems which require consistent phase across multiple antennas, and power-based localization systems.